The Original Architecture - Spreadsheets & 3rd Parties & Captive Portals, Oh My!

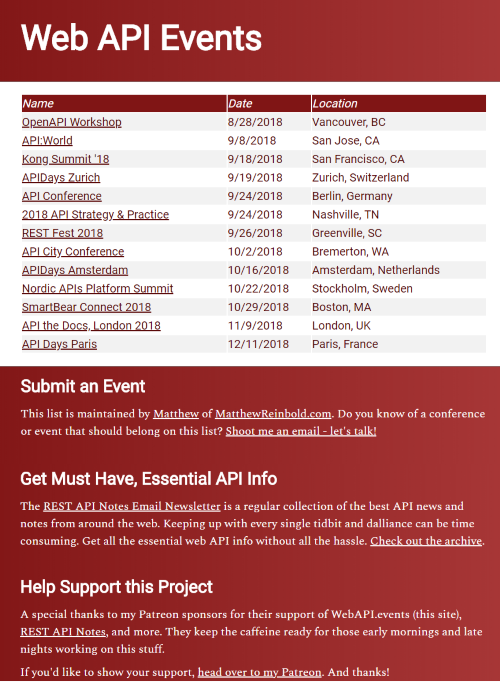

Years ago, in an effort to support the API community, I started a website called “WebAPI.events”. It listed a collection of upcoming, in-person conferences and meetups for those interested in service design.

I used the original site as an opportunity to play with several ideas converging in my head. The first was using a Google spreadsheet as the data store. Setting up, securing, hosting, and maintaining traditional database software seemed like overkill for a little project like this. A spreadsheet also significantly broadens the audience able to follow along with a similar approach.

I also didn’t think a little “tool” site warranted a paid hosting account. GitHub Pages is a simple, free means of hosting “static” sites: those composed of CSS, HTML, and JavaScript. The “trick” was getting the information from the spreadsheet to the JavaScript hosted on GitHub. For that, I used APISpark, from Restlet. After some configuration, it converted a Google spreadsheet into an API.

That worked for many years. It never occurred to me, however, that SSL was something this kind of site needed. Even when free certs became available, from sources like Let’s Encrypt, it seemed silly. I wasn’t storing sensitive information!

Besides, not having SSL made WebAPI.events my go-to on “captive portals”. Encrypted sites, like Google or Twitter, usually just timed out if I attempted to open them before agreeing to the captive portal terms. A quick browse attempt to WebAPI.events, however, and the hotel sign-in page appeared immediately.

The Need for Change

Today, however, there are several reasons to change. The first is the announcement that Chrome would begin marking HTTP-only sites as insecure. It was a move copied by other browsers, like Firefox. Some, like RSS co-creator David Winer, felt the move was a gross overreach, and that it painted sites that don’t collect info, like WebAPI.events, with too broad a brush. However, I was convinced that my site needed SSL after watching security expert Troy Hunt demonstrate its importance for static sites.

This happened at about the same time that GitHub announced SSL support for custom domain names. It was the right thing to do, it was free, and it should have been easy. With little other excuse, it was time to get to work.

The Work

The first bit was some light rework of the software architecture. Out went the Google Form fronting the spreadsheet, the spreadsheet itself, and Restlet. I’d just edit the JSON data directly. It would be stored in a file alongside the site code. The GUI, rather than calling an API, would instead load JSON from the same site. That part of the plan went pretty much as intended.
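For anyone wanting to try the same approach, here is a minimal TypeScript sketch of the "load JSON from the same site" idea. It is not the site's actual code: the `EventEntry` fields, the `events.json` file name, and the `upcomingEvents` and `loadEvents` helpers are all assumptions made up for illustration.

```typescript
// Hypothetical shape of one entry in the JSON file.
// The post does not give the real field names; these are assumptions.
interface EventEntry {
  name: string;
  city: string;
  startDate: string; // ISO 8601 date such as "2018-09-24"
  url: string;
}

// Keep only events that start on or after `today`, soonest first.
// ISO dates in YYYY-MM-DD form sort correctly as plain strings.
function upcomingEvents(events: EventEntry[], today: string): EventEntry[] {
  return events
    .filter((e) => e.startDate >= today) // filter returns a new array
    .sort((a, b) => a.startDate.localeCompare(b.startDate));
}

// Load the event list from a file served alongside the site code.
// `getJson` is injected so the sketch also runs outside a browser;
// "events.json" is an assumed file name, relative to the page.
async function loadEvents(
  getJson: (path: string) => Promise<unknown>,
  path: string = "events.json",
): Promise<EventEntry[]> {
  const data = await getJson(path);
  if (!Array.isArray(data)) {
    throw new Error(`Expected ${path} to contain a JSON array`);
  }
  return data as EventEntry[];
}
```

In a browser, `getJson` could be as small as `(p) => fetch(p).then((r) => r.json())`. Because the path is relative, the request goes to the same origin as the page, so there is no third-party API to configure and the data is served over whatever protocol the page itself uses.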

I live-tweeted the challenge of updating the site within the runtime of the latest Mission: Impossible movie. The part that tripped me up was applying GitHub’s cert to a custom domain name. I was in good company. I ended that tweet-storm, and the evening, on a sour note.

After a few more fruitless attempts, I resorted to the CloudFoundry solution that had worked so well for my personal site. A few minutes referring to my notes from last time, some time to let the new DNS details propagate, and WebAPI.events was back!

Yet to Do

At the time I was pulling this piece together, designer Eric Meyer shared a different perspective. He described how certain parts of the world, like rural Uganda, are dependent on geosynchronous-satellite internet. Because of the slow speeds and rampant packet loss, local caching servers are a popular thing.

HTTPS is meant to prevent “man-in-the-middle” manipulations of a page. However, caching content also happens to require a “man-in-the-middle”. The move to SSL everywhere has rendered these important services unusable.

A possible solution is the use of “service workers”. I don’t know much about them right now, but they seem important for enabling reasonable access for the widest audience possible.

As Eric points out, that doesn’t completely solve the problem. There is still the issue of older browsers and operating systems. And it is not just in far-flung locations; libraries are another place where those dependent on access are limited to whatever older installations are available. Service workers won’t address that situation.

The design could also use some twiddling. What is there now is serviceable (although the table is a bit small on mobile devices). There are templates available, but the thousands of lines of CSS boilerplate needed to support responsive designs are shocking. Given the normalized complexity in everything from cloud deployment pipelines to front-end JavaScript frameworks, I probably shouldn’t be surprised. But damn.

Conclusion

So WebAPI.events is refreshed. It has a simplified workflow, reduced dependencies on 3rd parties, a simplified design, and is only ever served up with SSL.

And those “captive portals” at airport and hotel wifi spots? Rather than pinging my own stuff, I’ve started using “NeverSSL.com”. Simple, easy-to-remember, and perfect for man-in-the-middle hijacking.